1. Introduction

•

The Policy Gradient Algorithm optimizes a policy distribution by updating network parameters

◦

Applicable even to continous action space(with few restirctions on the action space)

•

Unlike DQN, when input states are given, the network outputs a probability distribution over possible actions based on parameter

◦

From this distribution, an action is stochastically selected

•

The model is trained to maximize the expected return

2. Policy Gradient computation

•

Objective Function

◦

The expected return itself is used as the objective function

◦

▪

: Reward

▪

: Discount factor

▪

: Trajectory generated by policy

▪

: The PDF of the trajectory generated by the policy

•

The policy is parameterized by

•

expansion

•

transition probability is independent of the policy → Delete index

◦

•

By the Markov property

◦

◦

•

Express the objective function using the state-value function.

◦

Express using the definition of expectation

▪

▪

•

Express using logarithmic differentiation

◦

•

•

Adjust the reward to be consistent with its definition.

•

Update using gradient ascent

◦

•

Introduce the Action value function

◦

◦

: Markov property

◦

•

Introduce the Baseline

◦

Replace the Q-function with an arbitrary function that is independent of the action.

◦

◦

Therefore, subtracting any baseline that is independent of the action from the Q-function does not affect the overall value of the equation.

◦

◦

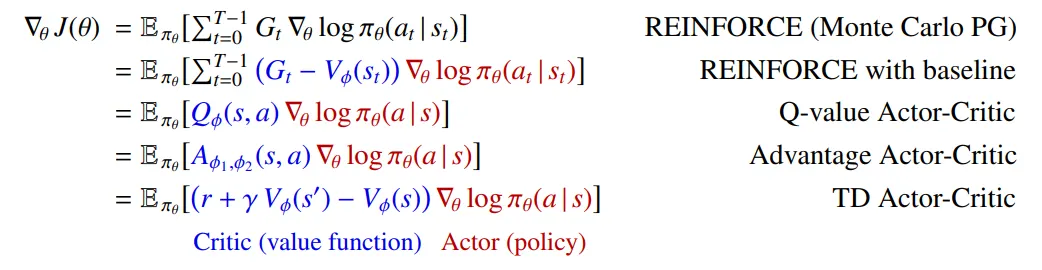

3. Summary

As a result, examining the form of each gradient shows that it consists of a product of two terms: one containing information about the direction in which the parameters should be optimized (), and another indicating how much the parameters should be updated in that direction. In practice, since it is difficult to directly compute the expectation over the entire trajectory, the sample mean is used as an approximation. The summation symbol () is omitted because, in many cases, online reinforcement learning updates the parameters immediately based on data generated from a single step.