1. REINFORCE

•

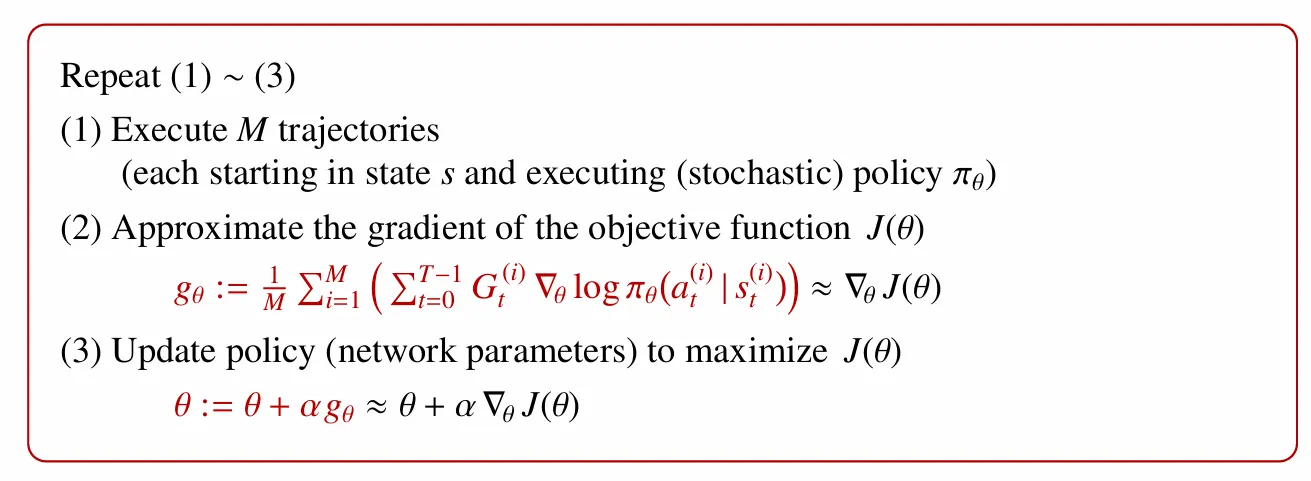

Among the various approximate forms of the policy gradient discussed earlier, this corresponds to the REINFORCE algorithm, which uses the return.

1.

Generate trajectories based on current parameter

2.

Compute Return for each episode

3.

Approximate using the sample mean

4.

Since the objective function is defined as the expected return over the entire trajectory, apply gradient ascent to update the parameters.

•

Disadvantages of REINFORCE

◦

The policy can only be updated after a full episode has finished

◦

Since the gradient is proportional to the return, it exhibits high variance.

◦

On-policy method

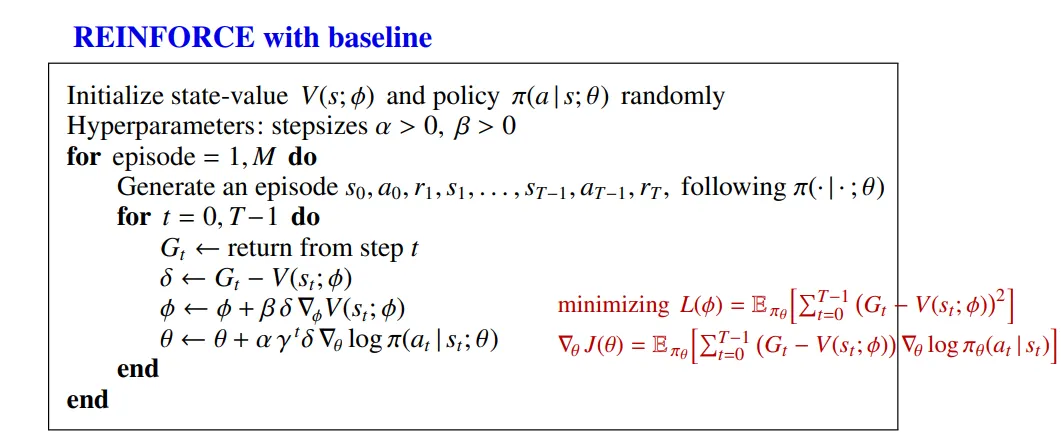

2. REINFORCE with baseline

•

To reduce the variance, a baseline is introduced

•

If this baseline is a function independent of the action, then, as proven earlier, it does not effect the expectation of the gradient.

1.

Generate an episode based on the current policy parameter .

2.

Compute the return for each time step.

3.

Use the computed return as the target and apply gradient descent to update the parameters of the baseline (state-value function).

4.

Apply the gradient ascent method to update the policy parameters.

5.

After updating with one episode, repeat the process for newly generated episodes.

•

is approximated using the sample mean, and the update is performed in an online manner — that is, the policy is updated immediately using data obtained from each 1-step interaction.